Hello, we’re the team from NTHU (National Tsing-Hua University), Taiwan. Our team mainly focuses on the design with supporting TVM on RISC-V architecture with SIMD instructions. In this RFC, we target on the application for RISC-V P extension(RVP). This is the extension for RISC-V DSP and subword SIMD extension. Note that a preliminary version of this work is reported at RISC-V Global Forum, Sep. 3, 2020, Lightning talk session(video link).

Intro of RISC-V P extension(RVP)

RISC-V is an open source ISA with multiple extensions for different application needs. For vector computation, RISC-V provides “V” and “P” extension to support superword SIMD and subword SIMD, respectively. Here we target on RVP, it’s designed for embedded processors or DSP-like devices. All of computation use general purpose registers (32, 64 bits) with lower precision numerical such as fixed point and integer. In our previous work below at TVM conference, we give the flow for fixed-point flow. As we learn that there is a QNN flow in TVM, we devise the TVM QNN flow for RISC-V P extension. This will make our flow more compatible with existing TVM flow.

The previous work for RVP in TVM for fixed-point flow is given below.

- Supporting TVM on RISC-V Architectures with SIMD computation (video link)

The specification of RISC-V P extension is as follows.

Motivation

As we’re trying to find a friendly application that related to RVP, we found QNN as the best practice for us. Especially for pre-quantized flow from QNN dialect, most of Ops are either in int8/uint8 or int32, these are suitable to enable subword SIMD computation. As TVM upstream doesn’t have support for RISC-V in topi implement or any handling for scheduling. We want to propose our work which mainly focuses on enabling tensorization on conv2d_nchw_int8 and vectorization on Ops in int32.

Approach

Outline

- New target :

riscv_cpu - Introduce an intrinsic for dot-product in convolution, and enable it by tensorization.

- Vectorize Ops to generate SIMD pattern (add).

- Introduce a custom runtime to easily generate executable files for the Spike simulator.

- Run spike to get the result.

RISC-V Target

- register new TVM target :

riscv_cpu- using llvm as our target backend with

--mtriple=riscv64-unknown-elf --system-lib

- using llvm as our target backend with

- add

codegen_riscv.ccas RISC-V specific code generator- register for

target_riscv32andtarget_riscv64

- register for

- register strategy, specially handle schedule for

conv2d_nchw_int8with tensorize- uses x86’s compute/schedule for others

- since Spike doesn’t support parallel computing, we use an empty schedule for

schedule_injective(), except for Ops that gonna be vectorized

Intrinsic for dot product

In order to efficiently executing convolution, we propose to use the following instructions in RVP :

- smaqa : Signed Multiply Four Bytes with 32-bit Adds

- smaqa.su : Signed and Unsigned Multiply Four Bytes with 32-bit Adds

- with

helper_change_dtypes_to_uint8_int8()from x86 legalize flow, we can get uint8 x int8

- with

Instructions above accumulate the product into 32-bits directly, it save the effort to save temp result as 16-bits, also preserve the accuracy compared with using SIMD Mul with 8-bits. The int32_lanes is fixed as 2 since maximum length of a register in RVP is 64-bits. This is done in one instruction and with plenty of subword parallelism.

Intrinc func is delcared as :

# num_int8_elements = 4

# int32_lanes = 2

def _intrin_func(ins, outs):

def _instr(index):

ib = tvm.tir.ir_builder.create()

if index == 1:

ib.emit(outs[0].vstore(0, tvm.tir.const(0, 'int32x%d' % (int32_lanes))))

return ib.get()

dtype_a = '%s8x%d' % (data_dtype, num_int8_elements)

dtype_b = '%s8x%d' % (kernel_dtype, int32_lanes * num_int8_elements)

dtype_c = 'int32x%d' % (int32_lanes)

a_int8 = ins[0].vload([0], dtype_a)

re_int32 = tvm.tir.call_intrin('int32', 'tir.reinterpret', a_int8)

vec_ai32 = re_int32.astype(dtype_c)

vec_a = tvm.tir.call_intrin(dtype_b, 'tir.reinterpret', vec_ai32)

vec_b = ins[1].vload([0, 0], dtype_b)

# Call intrinsic for RVP

d_dtype = 's' if data_dtype == 'int' else 'u'

k_dtype = 's' if kernel_dtype == 'int' else 'u'

if d_dtype == 'u' and k_dtype == 's':

inst = 'llvm.riscv.simd.%s%sdot.v%di32' % (

k_dtype, d_dtype, int32_lanes)

vdot = tvm.tir.call_llvm_pure_intrin(dtype_c,

inst,

tvm.tir.const(0, 'uint32'),

vec_b, vec_a)

else:

inst = 'llvm.riscv.simd.%s%sdot.v%di32' % (

d_dtype, k_dtype, int32_lanes)

vdot = tvm.tir.call_llvm_pure_intrin(dtype_c,

inst,

tvm.tir.const(0, 'uint32'),

vec_a, vec_b)

if index == 0:

ib.emit(outs[0].vstore(0, vdot))

else:

ib.emit(outs[0].vstore(0, vdot + outs[0].vload([0], 'int32x%d' % (int32_lanes))))

return ib.get()

# body, reset, update

return _instr(0), _instr(1), _instr(2)

Vectorization

In addition to enable tensorization for convolution, we can also improve the performance for other Ops by vectorizing. For example, most of add in pre-quantized model are in int32, we can vectorize it with lanes 2 to utilize SIMD instructions like kadd32(SIMD 32-bit Signed Saturating Addition).

# python/tvm/topi/riscv_cpu/injective.py

# schedule_injective(), check if op is `add`

A = op.output(0)

if op.input_tensors[0].dtype == 'int32' and op.input_tensors[1].dtype == 'int32':

if A.shape[-1] % 2 == 0:

o, i = s[A].split(A.op.axis[-1], 2)

s[A].vectorize(i)

We’re also considering to enable vectorize for other Ops. All the other works are in progerss (SIMD Mul, Max ..).

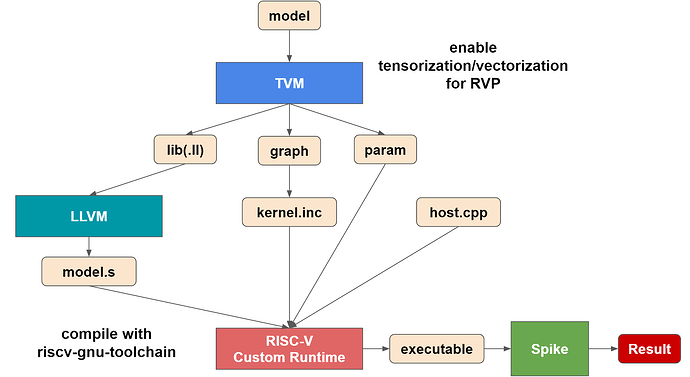

RISC-V Custom Runtime (RISC-V DLR)

As we’re trying to use TVM’s LLVM backend to generate implementation of the model, and plan to run it on Spike. We need a corresponding LLVM for generating correct assembly and then write a C++ code to calling it (including set input, run, and get output). In the end, we need to compile this program with riscv-gnu-toolchain. To get a minimal runtime for such an environment, we use a custom runtime which is fetched from TVM GraphRuntime with extra features. We remake a C++ interface to invoke the GraphRuntime function, thus making it possible to make the input of runtime be clean and directly usable after relay.build. We found this concept is quite similar with bundle_deploy, and we’re currently looking for some advice on which flow we should follow or possible approach to reuse.

Thus, for this C++ code, we need..

- The output after TVM’s

relay.build:.graph,.params - with data/label read function in C++

- calling a custom interface to invoke function in GraphRuntime

- sample code

host.cppat here- this file do the similar behavior as

demo_static.ccin bundle_deploy

- this file do the similar behavior as

In this flow, we use a build() function which collect the needed information from .graph to generate a kernel.inc file. In this file, the order and the function to be called is presented.

Following is the example of kernel.inc:

// kernel.inc

extern "C" int32_t fused_transpose(void* args, void* arg_type_ids, int32_t num_args);

extern "C" int32_t fused_nn_conv2d_7(void* args, void* arg_type_ids, int32_t num_args);

extern "C" int32_t fused_nn_bias_add_5(void* args, void* arg_type_ids, int32_t num_args);

extern "C" int32_t fused_nn_relu_4(void* args, void* arg_type_ids, int32_t num_args);

// ...

void dlr::DLR::Runx()

{

int32_t ret;

ret = fused_transpose(opa[0].values, opa[0].tcodes, opa[0].num);

assert(ret == 0);

ret = fused_nn_conv2d_7(opa[1].values, opa[1].tcodes, opa[1].num);

assert(ret == 0);

ret = fused_nn_bias_add_5(opa[2].values, opa[2].tcodes, opa[2].num);

assert(ret == 0);

ret = fused_nn_relu_4(opa[3].values, opa[3].tcodes, opa[3].num);

// ...

}

This Runx() is similar with tvm_runtime_run() from bundle_deploy. The other functions like set_input(), get_output() is also provided in this runtime.

Execute

Once we prepare the build_model.py, host.cpp, we can simply run it and execute on Spike. The example is provided at here.

Overview

Evaluation

With evaluting on Spike, the only metric we can compare is instruction count for either each Ops or entire model. Thus, we compare the instruction count for the entire model between pre-quantized model with tensorization/vectorization and model in FP32. The models are downloaded from TFLite host models.

The following table shows the instruction count for the enire model :

| Model name | Pre-quantized with tensorization/vectorization | FP32 | SpeedUp |

|---|---|---|---|

| Mobilenet_v1 | 3763660304 | 804941581 | 4.67 |

| Mobilenet_v2 | 2384447853 | 686688571 | 3.47 |

| Inception_v3 | 39933768309 | 4909041142 | 8.14 |

| Inception_v4 | 92224223497 | 10434567078 | 8.83 |

Related project

LLVM

For matching with the intrinsic we designed and called in TVM, we need a LLVM which handles RISC-V P extension properly. Since there doesn’t have an official version so far. We implemented it by ourselves with v9.0.0., please refer to project in github.

RISC-V Custom Runtime (RISC-V DLR)

As mentioned before, this is still an alternative approach and optimizable (we believe it is). Our team is still working on this part for making it more flexible and clean. We’re also looking for a better idea, please leave some comments for it. As our need for evaluation is only on Spike on our devices. We didn’t consider any constraint about remote/host. Once you create an executable file from this runtime, you can immediately run it by spike to get the result(without going through OpenOCD flow). The project is open source at here. The details and the building step is described in README.

riscv-gnu-toolchain & Spike

Both binutils and Spike(riscv-isa-sim) currently don’t support the RISC-V P extension officially. Thus, we implement it and add instructions that may be used in this flow. Please refer to the project below.

- riscv-binutils : GitHub - nthu-pllab/riscv-binutils-rvp: RISC-V binutils with P extension support for TVM QNN

- riscv-isa-sim : GitHub - nthu-pllab/riscv-isa-sim: Spike with P extension support for TVM QNN.

Next step

We plan to collect the comments from the community and reorganize our code after, then the PR will be sent.