Hi all,

I've read the documentation and the official TVM guide on compiling ONNX models, but I'm still having trouble getting my ONNX model to compile with TVM. The model was trained on breast cancer data and converted to ONNX. Here is the code:

```python
import onnx
import numpy as np
import tvm
from tvm import te
import tvm.relay as relay
import mlflow

# Load a previously converted model. This model was converted from scikit-learn to ONNX with Hummingbird.
onnx_model = mlflow.onnx.load_model("\onnx_model")
ml_model = mlflow.models.Model.load("\onnx_model")
input_example = ml_model.load_input_example("\onnx_model")

# The code below is from the TVM ONNX example in the official docs:
# https://tvm.apache.org/docs/how_to/compile_models/from_onnx.html#compile-the-model-with-relay
target = "llvm"
input_name = "1"
shape_dict = {input_name: input_example}

# The line below throws errors about the shape
mod, params = relay.frontend.from_onnx(onnx_model, shape_dict)

with tvm.transform.PassContext(opt_level=1):
    executor = relay.build_module.create_executor(
        "graph", mod, tvm.cpu(0), target, params
    ).evaluate()
```
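For comparison, the TVM tutorial linked above builds the dictionary as `shape_dict = {input_name: x.shape}`, where `x` is a NumPy array: the value is the input's shape tuple, not the data itself. Below is a minimal sketch of doing the same with `input_example`, assuming it is a NumPy array or pandas DataFrame and that the model expects `float32` input (the default `dtype` of `from_onnx`). `make_shape_dict` is a hypothetical helper, not a TVM API.

```python
import numpy as np


def make_shape_dict(input_name, example):
    """Build the `shape` argument for relay.frontend.from_onnx.

    Returns ({input_name: shape tuple}, float32 array of the example).
    Assumes `example` is array-like (NumPy array, pandas DataFrame, nested list).
    """
    # Assumption: the model input is float32, matching from_onnx's default dtype.
    data = np.asarray(example, dtype=np.float32)
    # TVM wants plain ints in the shape, e.g. (rows, features).
    return {input_name: tuple(int(d) for d in data.shape)}, data


# Made-up stand-in for input_example: 2 rows x 30 features of zeros.
shape_dict, data = make_shape_dict("1", np.zeros((2, 30)))
print(shape_dict)  # {'1': (2, 30)}
```

The resulting `shape_dict` would then be passed to `relay.frontend.from_onnx(onnx_model, shape_dict)`, and the `float32` array could be fed to the compiled model the way the tutorial does, e.g. `executor(tvm.nd.array(data))`.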

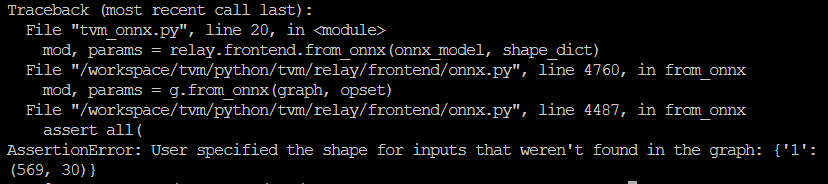

and I get this error:

I'm a bit confused about the shape dictionary. What should I put in it?

Thanks so much for your time,
Chloe