Hi friends:

I am currently working on a project that requires combining TensorRT and Ansor tuning to boost a model's inference performance. I first tune the model with Ansor and save the whole tuning log to a JSON file called best_ansor_tune.json. Then I use the following code to combine TensorRT with the Ansor tuning result, hoping to get better performance:

    mod, config = partition_for_tensorrt(mod, params, remove_no_mac_subgraphs=True)
    config['remove_no_mac_subgraphs'] = True
    with tvm.transform.PassContext(opt_level=3, config={"relay.ext.tensorrt.options": config}):
        mod = tensorrt.prune_tensorrt_subgraphs(mod)
    # exit(1)
    from tvm.relay.backend import compile_engine
    compile_engine.get().clear()
    with auto_scheduler.ApplyHistoryBest(log_file):
        with tvm.transform.PassContext(opt_level=3, config={'relay.ext.tensorrt.options': config, "relay.backend.use_auto_scheduler": True}):
            if debug_mode == 1:
                json, lib, param = relay.build(mod, target=target, params=params)
            else:
                lib = relay.build(mod, target=target, params=params)
    lib.export_library('deploy_trt_ansor_640_640_new.so')

However, I get messages saying that some workloads cannot be found, which confuses me. I tried the same method on another model, and it did not show any such warning. Does anyone know what is wrong? Or does anyone know how to combine Ansor tuning and BYOC TensorRT correctly?

You should do it in the reverse order: first partition the graph with TRT, and then tune the parts that are not offloaded using Ansor. Partitioning with TRT changes the graph structure and therefore results in different tuning tasks.

Specifically, you should use the module after this line to extract tuning tasks:

    mod = tensorrt.prune_tensorrt_subgraphs(mod)
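To see whether an existing log still matches the partitioned graph, one option is to compare the workload keys of the extracted tasks with the keys recorded in the log. The following is only a minimal sketch: the helper names are hypothetical, and it assumes the log holds one JSON record per line with the workload key stored at `record["i"][0][0]`, which may differ between TVM versions.

```python
import json


def logged_workload_keys(log_lines):
    """Collect the workload keys found in an Ansor tuning log (one JSON record per line)."""
    keys = set()
    for line in log_lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        record = json.loads(line)
        # Assumed layout: record["i"][0][0] is the workload key string.
        keys.add(record["i"][0][0])
    return keys


def untuned_workloads(task_keys, log_lines):
    """Return the task workload keys that have no record in the log, in input order."""
    logged = logged_workload_keys(log_lines)
    return [key for key in task_keys if key not in logged]
```

With the tasks from `auto_scheduler.extract_tasks`, this could be called as `untuned_workloads([t.workload_key for t in tasks], open(log_file))`. A non-empty result suggests the log was produced for a differently structured graph, although the real lookup may also take the target into account.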

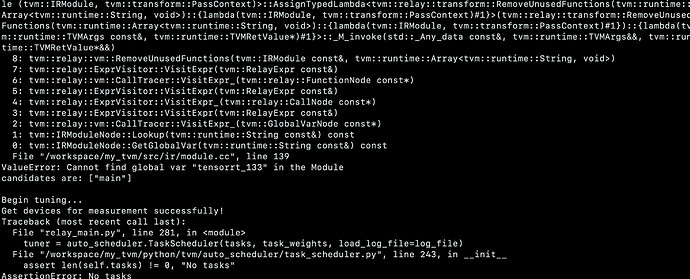

Hi, thank you for your response. I tried the method you mentioned, but got the error shown above. Do you know the reason?

Here is the core code I use for Ansor tuning and BYOC TRT:

    # step 1: partition graph
    mod, config = partition_for_tensorrt(mod, params, remove_no_mac_subgraphs=True)
    with tvm.transform.PassContext(opt_level=3, config={"relay.ext.tensorrt.options": config}):
        mod = tensorrt.prune_tensorrt_subgraphs(mod)

    # step 2: ansor tune
    tasks, task_weights = auto_scheduler.extract_tasks(mod["main"], params, target)
    for idx, task in enumerate(tasks):
        print("========== Task %d (workload key: %s) ==========" % (idx, task.workload_key))
        print(task.compute_dag)

    print("Begin tuning...")
    measure_ctx = auto_scheduler.LocalRPCMeasureContext(repeat=1, min_repeat_ms=300, timeout=10)
    if os.path.isfile(log_file):
        tuner = auto_scheduler.TaskScheduler(tasks, task_weights, load_log_file=log_file)
    else:
        tuner = auto_scheduler.TaskScheduler(tasks, task_weights)
    tune_option = auto_scheduler.TuningOptions(
        num_measure_trials=20000,  # change this to 20000 to achieve the best performance
        runner=measure_ctx.runner,
        measure_callbacks=[auto_scheduler.RecordToFile(log_file)],
    )
    tuner.tune(tune_option)

    # step 3: combine tensorrt and ansor tune to build model
    print("compiling ... ")
    with auto_scheduler.ApplyHistoryBest(log_file):
        with tvm.transform.PassContext(opt_level=3, config={'relay.ext.tensorrt.options': config, "relay.backend.use_auto_scheduler": True}):
            lib = relay.build(mod, target=target, params=params)
    lib.export_library('deploy_trt_ansor.so')

It just means that all tunable tasks are offloaded to TRT, so Ansor won't help improve the model performance in this case.

Oh, I see. Is there a way to choose which subgraph is handled by BYOC TensorRT, so that the rest of the graph is tuned by Ansor?

Unfortunately we have no way to do so yet.

Hi, I have just run into the same problem as you. You should use mod instead of mod['main'] in extract_tasks:

    tasks, task_weights = auto_scheduler.extract_tasks(mod, params, target)

This is because the global vars generated by the TensorRT partitioning are outside the main function, so passing only mod['main'] leaves them out.
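To check this on a partitioned module, it may help to list the global functions that sit next to main. The helper below is a hypothetical sketch, not part of TVM; it relies only on `IRModule.get_global_vars()` and the `name_hint` attribute of each `GlobalVar`.

```python
def non_entry_function_names(mod, entry="main"):
    """Return the sorted names of all global functions in `mod` except the entry function."""
    return sorted(
        gv.name_hint
        for gv in mod.get_global_vars()
        if gv.name_hint != entry
    )
```

Calling `non_entry_function_names(mod)` after `partition_for_tensorrt` should show the functions created for the offloaded subgraphs. If the list is non-empty, `mod['main']` alone does not contain the whole model.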