Hi everyone,

I've recently been trying to run the optimization part of the mlc.ai course that uses ms.tune_tir (6.1. Part 1 — Machine Learing Compilation 0.0.1 documentation).

Here is my source code:

    import tvm
    from tvm.ir.module import IRModule
    from tvm.script import tir as T, relax as R
    from tvm import relax
    from tvm import meta_schedule as ms
    import numpy as np

    target = "nvidia/geforce-rtx-3060"
    dev = tvm.cuda(0)

    @tvm.script.ir_module
    class MyModuleMatmul:
        @T.prim_func
        def main(A: T.Buffer((1024, 1024), "float32"),
                 B: T.Buffer((1024, 1024), "float32"),
                 C: T.Buffer((1024, 1024), "float32")) -> None:
            T.func_attr({"global_symbol": "main", "tir.noalias": True})
            for i, j, k in T.grid(1024, 1024, 1024):
                with T.block("C"):
                    vi, vj, vk = T.axis.remap("SSR", [i, j, k])
                    with T.init():
                        C[vi, vj] = 0.0
                    C[vi, vj] = C[vi, vj] + A[vi, vk] * B[vk, vj]

    sch_tuned = ms.tune_tir(
        mod=MyModuleMatmul,
        target=target,
        max_trials_global=64,
        num_trials_per_iter=64,
        work_dir="./tune_tmp_66",
    )

    sch = ms.tir_integration.compile_tir(sch_tuned, MyModuleMatmul, target)
    rt_mod = tvm.build(sch.mod, target=target)

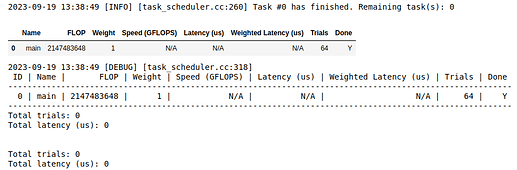

The tune_tir step creates database_tuning_record.json and database_workload.json in the work_dir.

But the compile_tir step returns None for sch, so building the module fails with the following error message:

AttributeError: 'NoneType' object has no attribute 'mod'

Why is sch None, and how can I solve the problem? Did the tuning process not run to completion?
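
To check whether any of the 64 trials actually produced a usable record, I think the tuning record file could be inspected with something like the sketch below. It assumes that each line of database_tuning_record.json is a JSON array of the form [workload_index, [trace, run_secs, target, args_info]] and that failed trials are stored with a very large run time such as 1e10. I have not confirmed this layout for my TVM version, and `summarize_records` and `FAILED_RUN_SECS` are names made up for this example.

```python
import json

# Assumption: run times at or above this value mark a failed trial.
FAILED_RUN_SECS = 1e9


def summarize_records(lines):
    """Count usable and failed tuning records from JSON-lines text."""
    valid, failed = 0, 0
    best = None
    for line in lines:
        line = line.strip()
        if not line:
            continue
        # Assumed layout: [workload_index, [trace, run_secs, target, args_info]]
        _workload_idx, record = json.loads(line)
        run_secs = record[1]
        if not run_secs or any(s <= 0 or s >= FAILED_RUN_SECS for s in run_secs):
            failed += 1
            continue
        valid += 1
        mean = sum(run_secs) / len(run_secs)
        best = mean if best is None else min(best, mean)
    return {"valid": valid, "failed": failed, "best_mean_secs": best}


# Example usage against the work_dir from my script:
# with open("./tune_tmp_66/database_tuning_record.json") as f:
#     print(summarize_records(f))
```

If this reported zero valid records, that would suggest every trial failed to build or run, which might explain why compile_tir has nothing to return.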

Thank you for your assistance.