-

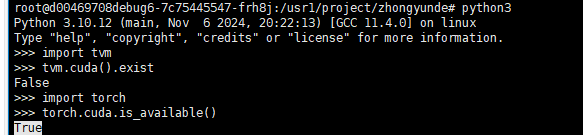

I get different results for `tvm.cuda().exist` and `torch.cuda.is_available()`, so maybe the TVM installed by `pip3 install apache-tvm` was not built with CUDA enabled?

-

The reason you got this error is that you are using the TVM Unity pre-built wheel for the CPU.

But it still shows `USE_CUDA: OFF` after `pip install mlc-ai-nightly-cu122`:

`python3 -c "import tvm; print('\n'.join(f'{k}: {v}' for k, v in tvm.support.libinfo().items()))" | grep CUDA`

USE_CUDA: OFF
USE_GRAPH_EXECUTOR_CUDA_GRAPH: OFF
CUDA_VERSION: NOT-FOUND

Refer to: "[Bug] compilation for CUDA fails on Linux with CUDA 11.8", Issue #339 in mlc-ai/mlc-llm on GitHub.
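For anyone debugging the same thing, here is a rough sketch of the check from the one-liner above as a small script. It also prints which `tvm` package Python actually imports, which can help when more than one TVM wheel has been installed in the same environment. The helper names `cuda_flags` and `report` are made up for this example; only `tvm.support.libinfo()` and `tvm.cuda(0).exist` come from TVM itself.

```python
def cuda_flags(libinfo):
    """Keep only the build flags whose name mentions CUDA (hypothetical helper)."""
    return {key: value for key, value in libinfo.items() if "CUDA" in key}


def report():
    """Print the CUDA-related build flags of whichever tvm package gets imported."""
    try:
        import tvm
    except ImportError:
        print("tvm is not importable in this environment")
        return

    # The path shows which installed wheel is actually being loaded.
    print("tvm loaded from:", tvm.__file__)

    # Same information as the `| grep CUDA` one-liner.
    for key, value in sorted(cuda_flags(tvm.support.libinfo()).items()):
        print(f"{key}: {value}")

    # False if the build has no CUDA support or no usable GPU is visible.
    print("tvm.cuda(0).exist:", tvm.cuda(0).exist)


if __name__ == "__main__":
    report()
```

If the printed path points at a CPU-only package, the `USE_CUDA: OFF` result would be expected no matter which CUDA wheel was installed afterwards. That is only one possible explanation, not a confirmed diagnosis for this thread.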