I tested DefaultGPUSchedule(), meta_schedule, and the dlight module. They work well, but only on TensorIR functions, not on Relax functions. Is there any API or pass that can map a pure Relax IRModule to TE (using the Relax frontend, not relay_translator.from_relay())?

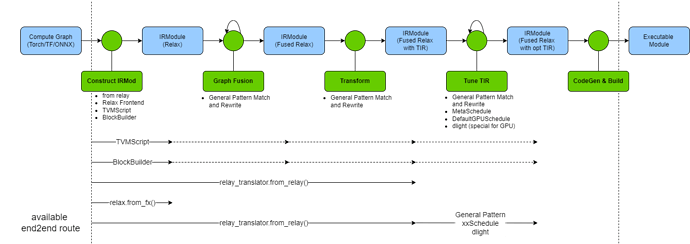

This is a draft I have made. What I need is an end-to-end load -> transform -> build -> run pipeline.

If we load a Torch/ONNX model into Relax by first importing it into Relay and then converting it with relay_translator.from_relay(), we get a Relax IRModule that already contains TensorIR functions.

But if we load a Torch fx module directly into Relax with from_fx(), all ops are mapped to Relax ops. These cannot be built, because Relax ops carry no schedule, so we have to map them to TE manually with bb.emit_te(). I have found several transform passes in MLC-LLM that do this, but they are project-specific and not generally available in the TVM repo:

    mod_deploy = mlc_llm.dispatch.DispatchTIROperatorAdreno()(  # pylint: disable=not-callable
        mod_deploy
    )
    mod_deploy = relax.transform.MetaScheduleApplyDatabase()(mod_deploy)
    mod_deploy = (
        mlc_llm.dispatch.DispatchTIROperator(  # pylint: disable=not-callable
            args.model_category
        )(mod_deploy)
    )
    mod_deploy = tvm.tir.transform.DefaultGPUSchedule()(mod_deploy)
    mod_deploy = mlc_llm.transform.LiftTIRGlobalBufferAlloc()(mod_deploy)
    mod_deploy = tvm.tir.transform.ForceNarrowIndexToInt32()(mod_deploy)

If we load a compute graph with the Relax frontend and want to build and run it, do we have to do the "Relax → TensorIR" lowering ourselves (for example, by implementing an unofficial LowerToTensorIR())? Or is there another solution?
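
To make the question concrete, the pipeline I am after would look roughly like the sketch below. It assumes that relax.transform.LegalizeOps() is available in the TVM build in use and that it lowers Relax ops to TensorIR functions. That assumption is not confirmed in this post, and the helper name build_relax_module is hypothetical.

```python
def build_relax_module(mod, target="llvm"):
    """Lower a pure Relax IRModule to TensorIR and build it (sketch only)."""
    # Imported inside the function so the sketch can be loaded without TVM installed.
    import tvm
    from tvm import relax

    target = tvm.target.Target(target)

    # Assumption: LegalizeOps rewrites high-level Relax ops into call_tir
    # calls that point at generated TensorIR PrimFuncs.
    mod = relax.transform.LegalizeOps()(mod)

    if target.kind.name != "llvm":
        # Non-CPU targets need thread bindings on the generated PrimFuncs;
        # DefaultGPUSchedule is the generic fallback already used above.
        with target:
            mod = tvm.tir.transform.DefaultGPUSchedule()(mod)

    # Returns an executable that could be loaded into relax.VirtualMachine.
    return relax.build(mod, target=target)
```

With something like this, a module returned by from_fx() could be passed in directly, and meta_schedule or dlight could replace the DefaultGPUSchedule step for better performance.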

Known Info: