My proposal is now implemented.

I ended up completely replacing the content of graph_plan_memory.cc with a Python implementation:

- Redirect to Python: tvm/src/relay/backend/graph_plan_memory.cc at e9184d948edd58635e79c3f21355f2b83b361401 · tum-ei-eda/tvm · GitHub

- Main implementation: tvm/python/tvm/relay/memplan.py at tumeda_memplan · tum-ei-eda/tvm · GitHub

- Support code: tvm/python/tvm/relay/memopt at tumeda_memplan · tum-ei-eda/tvm · GitHub

To work with the data-flow graph more conveniently, this adds networkx as a dependency. The optimal solution would additionally pull in the OR-Tools optimizer. The code also still contains a lot of debug functionality that TVM might not want.

How to contribute this back?

- I have started a PR to add the possibility to return offsets for buffers: memory planning: add offset to planning output and respect it in graph executor by rafzi · Pull Request #8134 · apache/tvm · GitHub

- Suggestion: Add the TFLM-inspired heuristic as the default and leave the optimized version as an external extension that plugs into an interface? That would drop a lot of code, and the heuristic could be implemented with almost nothing from the memopt folder.

- How should the interface to a memory planning implementation look? How about a registered function that is called only if it exists? Are there any similar optional extension interfaces?

- Any other suggestions?
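To make the heuristic-as-default and optional-extension ideas above more concrete, here is a minimal sketch in plain Python. It is not the code from memplan.py: the `Buffer` type, the registry helpers, and the hook name `memplan.external` are all hypothetical names made up for illustration, and the heuristic is a simple greedy-by-size placement in the spirit of the TFLM planner rather than a port of it. In TVM itself, the "call only if it exists" lookup could probably be done with the global function registry (`tvm.get_global_func(name, allow_missing=True)`), but that is an assumption about how the interface might be wired up.

```python
from typing import Callable, Dict, List, NamedTuple, Optional, Tuple


class Buffer(NamedTuple):
    """Hypothetical buffer description; not a TVM type."""
    name: str
    size: int       # size in bytes
    first_use: int  # index of the first op that touches the buffer
    last_use: int   # index of the last op that touches it (inclusive)


Planner = Callable[[List[Buffer]], Dict[str, int]]

# Stand-in for a global function registry (hypothetical).
_PLANNERS: Dict[str, Planner] = {}


def register_planner(name: str, fn: Planner) -> None:
    """Register an external planner under a name."""
    _PLANNERS[name] = fn


def get_planner(name: str) -> Optional[Planner]:
    """Return the registered planner, or None if nothing is registered."""
    return _PLANNERS.get(name)


def lifetimes_overlap(a: Buffer, b: Buffer) -> bool:
    """Two buffers conflict if their live ranges share at least one op."""
    return a.first_use <= b.last_use and b.first_use <= a.last_use


def greedy_by_size(buffers: List[Buffer]) -> Dict[str, int]:
    """Place the largest buffers first, each at the lowest free offset."""
    placed: List[Tuple[int, Buffer]] = []
    offsets: Dict[str, int] = {}
    for buf in sorted(buffers, key=lambda b: b.size, reverse=True):
        # Only buffers that are live at the same time constrain placement.
        conflicts = sorted(
            (off, other.size)
            for off, other in placed
            if lifetimes_overlap(buf, other)
        )
        offset = 0
        for start, size in conflicts:
            if offset + buf.size <= start:
                break  # the gap before this conflicting buffer is big enough
            offset = max(offset, start + size)
        offsets[buf.name] = offset
        placed.append((offset, buf))
    return offsets


def plan_memory(buffers: List[Buffer],
                hook_name: str = "memplan.external") -> Dict[str, int]:
    """Use the external planner if one is registered, else the heuristic."""
    planner = get_planner(hook_name) or greedy_by_size
    return planner(buffers)


def arena_size(buffers: List[Buffer], offsets: Dict[str, int]) -> int:
    """Total RAM needed for the planned layout."""
    return max((offsets[b.name] + b.size for b in buffers), default=0)
```

With three buffers A (100 bytes, ops 0 to 1), B (50 bytes, ops 1 to 2) and C (100 bytes, ops 2 to 3), the heuristic lets A and C share offset 0 and puts B at offset 100, so the arena is 150 bytes instead of the 250 bytes needed without sharing. An optimizer-based planner could then be registered under the hook name without the default path depending on it.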

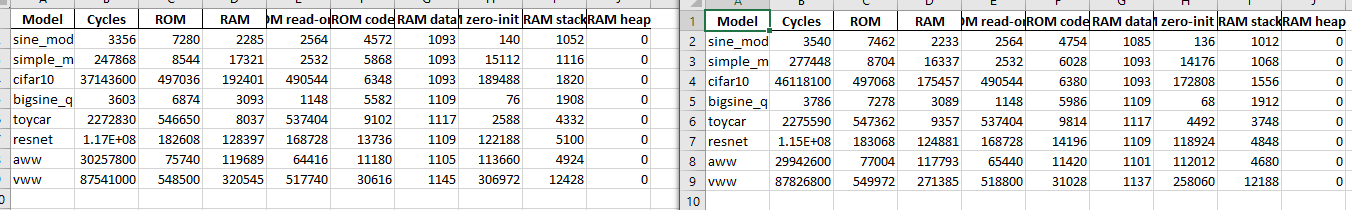

First results are shown below. This is evaluated on RISC-V and using the code generator. There still might be some issue, because I would not expect the RAM usage to rise in any example. But looks promising so far with 10% reduction for a cifar10 model and 18% for resnet!